It wasn’t until COVID-19 shut down the Krieger Hall lab of Leyla Isik that she discovered the 2010 BBC hit show Sherlock. But instead of binge-watching television to stave off pandemic boredom, Isik, the Clare Boothe Luce Assistant Professor in the Department of Cognitive Science, used it to advance her study of social vision.

Isik’s social vision research focuses on understanding how humans distill social information about others from what we see. “It’s just a ubiquitous thing that we’re always doing… You can’t stop looking at people and thinking about them,” Isik says. “Large portions of your brain are dedicated to recognizing these abilities, but we still don’t really understand how you do this.”

Before the pandemic, Isik’s team hosted study participants for electroencephalography and functional MRI experiments that monitored brain activity. Much of their research, like that of others in the field, involved showing participants controlled stimuli, such as videos of moving shapes, rather than more natural stimuli like television shows.

“So much social cognition research is done with very simple, lab-based tasks. It’s just such a far cry from the real world.”

—Leyla Isik, assistant professor

Natural stimuli versus social interactions

Isik had experience using natural stimuli. Her postdoctoral project involved participants watching a movie. However, the complications of these types of studies drew her away for a while.

“The problem with just showing people movies in the scanner is that [movies] are not designed as experimental stimuli,” she says. “It’s very hard to know if any effect you’re observing is due to the thing you’re interested in or some other highly correlated factor.”

Isik turned to natural stimuli again when her lab closed and her team was unable to perform in-person experiments. She accessed a public data set from the lab of Janice Chen, an assistant professor in the Department of Psychological and Brain Sciences. The data set showed how the brains of neurotypical adults responded when they watched the first episode of Sherlock.

To overcome the lack of controls in this natural stimulus, Isik and her team expanded on the data set by watching the episode themselves and labeling scenes with a variety of social features, including whether characters were interacting and whether a character was thinking about another character.

Then, controlling for other factors, Isik and her team used machine-learning analysis to identify whether—and how—participants’ brains responded to those different social features. “We weren’t even sure you would see a [brain] response to social interactions in movies,” Isik says. “But there was a good portion of the brain [particularly the superior temporal sulcus] that seemed to be responding only to the social interactions.”

Another study, in which Isik’s team labeled a data set from the United Kingdom that featured adults watching the movie 500 Days of Summer, produced identical findings, she says.

Isolating variables in natural stimuli

The work made two important contributions to the field, Isik says. First, the research showed it was possible to isolate individual variables in natural stimuli, an important methodological tool for future study of social vision in movies and television shows. Second, the study helped settle the debate about whether, and where, social interactions are processed in the brain. “I’m particularly excited about this line of research,” Isik says. “It brings us closer to understanding social vision in real-world scenarios.”

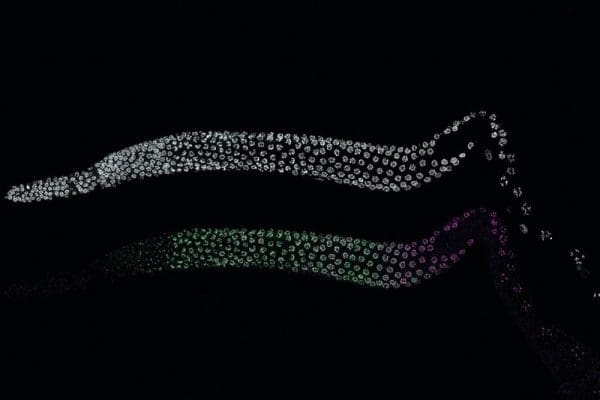

As the field of social vision continues to move forward and become more realistic, researchers inch closer to potential real-world applications for their work, Isik says. The research could help engineers advance artificial intelligence, such as technology for self-driving cars, to better recognize how people interact. As for medical applications, Isik and her team are beginning a project that will study how autism affects the neural responses to natural stimuli.